In a talk at the School of Engineering and Applied Sciences on September 12, Gary King, Weatherhead University Professor and director of the Institute for Quantitative Social Science, spoke about what he called the “largest selective suppression of human expression in history”: the Chinese government’s censorship of social media.

“This is an enormous government program designed to suppress the free flow of information,” King said in a presentation entitled “Reverse-Engineering Chinese Censorship.” “But since it’s so large, it paradoxically conveys an enormous amount about itself, about what [the censors are] seeking, and about the intentions of the Chinese government. So it’s like an elephant sneaking through the room. It leaves big footprints.”

Scholars have long debated the motivations for censorship in China, but King said that his research offers an unambiguous answer: the Chinese government is not interested in stifling opinion, but in suppressing collective action. Words alone are permitted, no matter how critical and vitriolic. But mere mentions of collective action—of any large gathering not sponsored by the state, whether peaceful or in protest—are censored immediately. As he and his graduate students Jennifer Pan and Margaret Roberts wrote in a study published this May in the American Political Science Review, “The Chinese people are individually free but collectively in chains.”

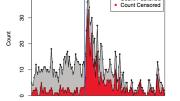

King’s conclusions were grounded in analyses of more than 11 million Chinese social media posts over the span of six months, using a system that allowed the researchers to access and download posts before they could be censored, and to check periodically on whether they were still available. The team used a set of keywords to collect posts in each of 85 topic areas, ranging from sensitive issues such as unrest in Inner Mongolia, or artist and dissident Ai Weiwei, to more innocuous ones like the World Cup and traffic in Beijing. For each topic, they could track the volume of posts and the proportion censored over time. In a second study, reported at the August annual meeting of the American Political Science Association, King’s team wrote more than 1,000 of their own posts and submitted them to Chinese social-media sites in order to test their hypotheses about which posts would be censored.

The result was a detailed view into the currents of Chinese social media. King and his colleagues observed that, like other social media all over the world, Chinese social media was “bursty,” with low-frequency chatter interrupted by occasional spikes of volume as certain topics went viral, usually in response to news events. At baseline levels of chatter, censorship in almost all topics was at a relatively low rate of 10 percent to 15 percent, typically occurring within 24 hours of posting. During some volume bursts, like the one following Ai’s sudden arrest in 2011, censorship would shoot up to 50 percent, or even more. But other spikes of activity, such as the one following speculation about a reversal of the One Child Policy, provoked no such response, with censorship levels remaining the same even as the topic swept the nation’s social media. (King’s team found just two topics, pornography and criticism of the censors, that were subject to constant, high levels of censorship, irrespective of volume.)

Why did only certain topics provoke such strong censorship? King’s team found that sensitive topics tended to share a potential for collective action. For instance, in the wake of the meltdown of Japan’s nuclear plant at Fukushima, a rumor circulated that iodine would help counter the effects of radiation poisoning, prompting a mad rush to buy salt in Zhejiang province. Even though that response had nothing to do with the government, King said the topic was heavily censored to forestall collective organization and action. In that vein, the research team studied censored posts in detail and found that posts with collective-action potential were equally (and highly) likely to be censored whether they supported or opposed government policy. As King told his audience, his findings suggest that all posts with collective-action potential are censored, whether they are exhortations to riot or citizens’ plans for a party to thank city officials. When the researchers wrote and submitted their own social media posts, they continued to find that threats of mobilization, not criticism alone, were what Chinese government censorship aimed to suppress.

In a final participatory step, the researchers set up their own social-media site in China. By contracting with Chinese software firms, they installed the same censorship technologies on their new site as those on existing sites, and they practiced writing and censoring their own posts to understand what censorship options were available. “The best part was, when we had a question, we called customer service, and they were terrific!” said King. “We just said, ‘How do we stay out of trouble with the Chinese government?’ And they said, ‘Let me tell ya!’”

Customer service gave the team a telling suggestion, to hire two or three censors for every 50,000 users, and was forthcoming about which technologies were most popular and helpful. King noted that the censorship process has some technical parallels to the moderation that social networks in the United States use to block child pornography or abusive language. Users’ posts might be targeted for review based on their previous posts, and certain content could be automatically sent for review if it contained certain keywords. But social media companies in China are largely able to choose their own censorship methods, King’s team observed, writing, “The government is (perhaps intentionally) promoting innovation and competition in the technologies of censorship.”

Keyword review has received widespread media attention, and Chinese users often adopt homophones (“river crab” to replace “harmony,” for instance) to get around online restrictions. But King’s team found that keyword review—which they call a “highly error-prone methodology”—played a relatively small role in censorship. Posts containing certain keywords like “masses,” “terror,” or “Xinjiang” were more likely to be filtered into automatic review before publication—a step King compared to purgatory—rather than published immediately and reviewed later, but the final decisions were left to manual censors.

The authors suggest that allowing online discussion and criticism may be “an effective governmental tool in learning how to satisfy, and ultimately mollify, the masses.” King speculated in his talk that online discussion could even be a useful tool for keeping tabs on the performance of local officials. “From this perspective,” the authors wrote, “the surprising empirical patterns we discover may well be a theoretically optimal strategy for a regime to use social media to maintain a hold on power.” What the Chinese government fears about social media, King summed up, is not criticism, but its ability to move people.