Istanbul, a city of 14 million people and a crossroads of cultural exchange dating back millennia, may also be where Turkey’s next major earthquake strikes. Cities along the North Anatolian Fault, which stretches from eastern Turkey to the Aegean Sea, have experienced an advancing series of strong quakes during the past 80 years, beginning in 1939 when a devastating 7.8-magnitude rupture leveled the city of Erzincan and killed 33,000 people. Most recently, in 1999, a 7.4-magnitude quake near the city of İzmit left 17,000 dead and half a million homeless. A few months later, another shock hit Düzce, 60 miles away.

Brendan Meade, an applied computational scientist and associate professor of earth and planetary sciences, recently built a computer model of conditions in the North Anatolian Fault. His computer code—incorporating a massive amount of geodetic and seismic data, plus 2,000 years of recorded earthquake history—simulated how stresses from one earthquake propagate from the epicenter and can eventually trigger other quakes. It also sought to explain the tectonic motions, perturbations, and strains observed in the North Anatolian Fault in the years before and after the massive İzmit quake. Meade and three collaborators calculated that, along the entire fault system, the fastest estimated slip-deficit rates and the strongest viscoelastic stress transfers from previous quakes—both indicating higher earthquake risk—right now occur just 30 miles east of Istanbul.

Those calculations took 2.5 million central processing unit (CPU) hours to complete and required a supercomputer capable of running many processors in parallel. “We tried to make the code as efficient and fast as possible, and I think we did a good job,” Meade says. But still, “That’s a lot of CPU hours.” It would be useful, he and the others thought, to analyze other earthquake regions, such as the San Andreas Fault in California, the Kunlun in Tibet, and the Alpine in New Zealand. For that to be feasible, though, the calculations would have to run much faster. So, Meade says, “we asked a neural network to do the work for us.”

With two graduate students, he built a small artificial neural network—a machine-learning system inspired by the neurons and synapses in the human brain that process and pass along information—and trained it to reproduce the results of his computer code. Instead of 2.5 million CPU hours and a supercomputer, the computations took less than five hours. That’s a 55,000 percent acceleration, one that could run on a laptop. Meade and his colleagues published their findings this year in Geophysical Research Letters.
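
The general idea of training a network to reproduce the output of a slower code can be illustrated with a toy example. The sketch below is not Meade's model or his data: `slow_model` is a hypothetical stand-in for an expensive calculation (here, a brute-force numerical integral), and `TinyNet` is an assumed one-hidden-layer network written in plain Python. It only shows the pattern of running the slow code once to generate training pairs and then fitting a small network to them.

```python
import math
import random


def slow_model(x, steps=2000):
    """Hypothetical stand-in for an expensive code: a midpoint-rule
    integral of exp(-t^2) from 0 to x, using many small steps."""
    h = x / steps
    return sum(math.exp(-((i + 0.5) * h) ** 2) for i in range(steps)) * h


class TinyNet:
    """One hidden layer of tanh units, one input, one output (illustrative only)."""

    def __init__(self, hidden=8, seed=0):
        rng = random.Random(seed)
        self.w1 = [rng.uniform(-1, 1) for _ in range(hidden)]
        self.b1 = [rng.uniform(-1, 1) for _ in range(hidden)]
        self.w2 = [rng.uniform(-1, 1) for _ in range(hidden)]
        self.b2 = 0.0

    def predict(self, x):
        hidden = [math.tanh(w * x + b) for w, b in zip(self.w1, self.b1)]
        return sum(w * h for w, h in zip(self.w2, hidden)) + self.b2

    def train_step(self, x, y, lr):
        """One stochastic gradient step on the squared error for a single pair."""
        hidden = [math.tanh(w * x + b) for w, b in zip(self.w1, self.b1)]
        err = sum(w * h for w, h in zip(self.w2, hidden)) + self.b2 - y
        for j, h in enumerate(hidden):
            # Gradient through tanh, computed before w2[j] is updated.
            grad_pre = err * self.w2[j] * (1.0 - h * h)
            self.w2[j] -= lr * err * h
            self.w1[j] -= lr * grad_pre * x
            self.b1[j] -= lr * grad_pre
        self.b2 -= lr * err


def mean_abs_error(net, data):
    return sum(abs(net.predict(x) - y) for x, y in data) / len(data)


def train_surrogate(n_samples=200, epochs=300, lr=0.02, seed=0):
    rng = random.Random(seed)
    # Run the slow code once per sample to build the training set.
    xs = [2.0 * i / (n_samples - 1) for i in range(n_samples)]
    data = [(x, slow_model(x)) for x in xs]
    net = TinyNet(seed=seed)
    error_before = mean_abs_error(net, data)
    for _ in range(epochs):
        rng.shuffle(data)
        for x, y in data:
            net.train_step(x, y, lr)
    return net, error_before, mean_abs_error(net, data)


net, error_before, error_after = train_surrogate()
print(f"mean error before training: {error_before:.3f}, after: {error_after:.3f}")
```

Once trained, `net.predict(x)` costs a handful of multiplications, while `slow_model(x)` loops over thousands of steps. That trade is the source of the speedup in this toy case; whether the network's answers are accurate enough has to be checked against the original code, as the printed errors do here.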

The catch is this: Meade doesn’t yet understand exactly how his neural network’s computations operate. In neural networks, this is often the case. But in that mystery, he says, lies the promise for science. “The neural network we built has come up with some representation of what our computer code does,” he explains. “But it doesn’t necessarily use the same functions that we’ve used,” ones with names and known mathematical properties. Instead, the neural network—which Meade trained using his trove of earthquake data and the end calculations his computer code yielded—can develop its own, much more computationally efficient, functions for getting from A to B. “In other words,” he says, “neural networks may be a tool for discovering functions that we haven’t gotten around to identifying and naming yet.” By “interrogating” the network after the fact, scientists can pick apart those nameless functions and learn from them. “This is an incredible tool for discovery,” Meade says. The goal is to start mapping the links between classical mathematics and as-yet undeciphered functions he and others are learning from neural networks: “Essentially, to create a Rosetta Stone.”

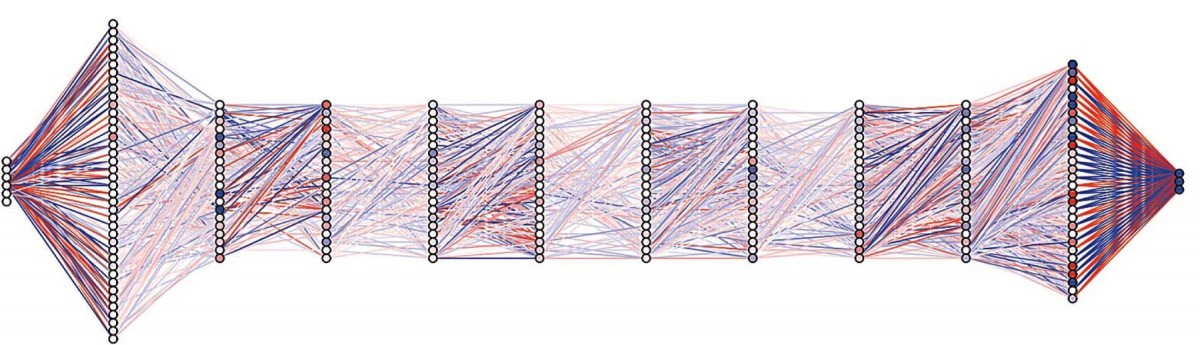

A visualization of Meade's neural network, showing how individual neurons (circles) are connected (colored lines) to facilitate complex computations.

Image courtesy of Brendan Meade

But the promise is more than just faster ways of completing calculations that scientists already understand; neural networks, he believes, may help decode complex natural systems whose underlying dynamics right now seem too messy or irregular for scientists to model effectively. Biology abounds with these conundrums, Meade says. So do the earth sciences. “When you think about fields that maybe aren’t as ‘clean’ as classical physics—the interaction between different parts of the environment, or within climate systems, or how the function of tectonic plates leads to earthquakes and builds mountains—we have lots of observations and data about these things,” but little ability to accurately predict how altering one element in a system of millions or billions might change a single output. Neural networks offer the prospect of making those predictions, Meade says, “even if we don’t understand the entire system at first.” That’s because, by processing data much faster and more efficiently than is otherwise possible, neural networks can spot previously invisible patterns and trends that current scientific models have had no way to identify and represent. “That’s truly the deep power of neural networks and artificial intelligence,” he says. “We have a tool now that can suggest new ideas to us. When we don’t know what to do, neural networks can give clues to a way forward.”

And Meade’s next step forward? He has recently turned his attention to a growing number of studies that attempt to identify precursors to earthquakes. Although scientists can tell with some accuracy where earthquakes are most likely to happen, predicting when one will strike has so far proved impossible. The studies conducted during the past decade have been confined to single earthquakes and small collections of isolated data, Meade says, but he wonders if neural networks could uncover broad and robust correlations that would help a city like Istanbul forecast and prepare for a quake. “The question now,” he says, “is whether we can assemble data sets that are large enough and coherent enough that we can go after the earthquake-prediction problem in earnest.”